Our Latest Blog

Stay informed with the latest insights, trends, and developments in the world of cybersecurity. At ÒGÚN SECURITY RESEARCH AND STRATEGIC CONSULTING (OSRS), our blog features expert articles, in-depth analyses, and practical tips designed to enhance your understanding of cybersecurity challenges and best practices. Join our community of cybersecurity enthusiasts and professionals as we explore topics ranging from threat intelligence to AI governance and everything in between.

AI Pilots Take Flight: What Autonomous Aircraft Mean for Security, Policy, and the Future of Aviation

A Cessna Caravan flew over Rhode Island last week with its pilot's hands off the controls. The aircraft was operated by Merlin Pilot, an artificial intelligence system that listens, decides, and flies. AI pilots are no longer experimental. They are entering commercial aviation, military logistics, and defense operations at the same time. This article explains what is happening, who is building it, and why cybersecurity, policy, and intelligence practitioners must pay attention.

When AI Thinks Like a Mathematician: What This Week's Breakthroughs Mean for Security and Intelligence Professionals

OpenAI's AI just disproved an 80-year-old geometry conjecture — the first time an AI has autonomously solved a prominent open mathematical problem. This week's AI developments also include Anthropic's explosive compute economics, Meta's sweeping AI reorganization, Alibaba's Qwen benchmark push, and a UCLA discovery that reframes how we understand human aging. Here is what it all means for security, intelligence, and policy professionals.

EU Just Dropped 167 Pages on High-Risk AI — Here Is What Your Organization Needs to Know

The European Commission has published 167 pages of official guidelines on high-risk AI systems, while EU leaders agreed to extend the compliance deadline to December 2027. This sounds like breathing room. It is not permission to pause. If your organization uses AI for hiring, credit decisions, or public services, these guidelines directly affect you. Read on for a plain-language breakdown of what changed, what it means, and your immediate action list.

When AI Breaks the Lock: What the Claude Mythos-Apple Security Breach Means for You

Anthropic's unreleased Claude Mythos AI helped security researchers crack Apple's most advanced Mac security system in just five days. The exploit targeted Memory Integrity Enforcement on the M5 chip — a defense Apple spent five years and billions of dollars building. This is not science fiction. It is happening now. Here is what military, intelligence, law enforcement, and cybersecurity professionals need to understand about AI-assisted vulnerability research and what it sig

AI Just Wrote Its First Zero-Day. The Security Profession Will Never Be the Same.

On May 11, 2026, Google confirmed the first known case of cybercriminals using artificial intelligence to develop a zero-day exploit. The target was two-factor authentication on a popular open-source administration tool. The attack was disrupted, but the era it announces has only begun. This OSRS analysis breaks down what investigators found, why it matters for military, intelligence, law enforcement, and policy leaders, and the five priorities every organization should act o

AI as a Labor-Market Risk Indicator: What the April Challenger Report Means for the Cybersecurity Workforce

AI led U.S. job cuts for the second consecutive month, with 21,490 layoffs cited in April 2026 and 49,135 year to date. The category has tripled from 5% of total cuts in 2025 to 16% today. Most reflect budget reallocation toward AI infrastructure, not direct task replacement. For cybersecurity leaders, the data is forward intelligence: pipelines contract, SOCs run leaner, and demand for agentic tooling rises faster than the guardrails that should govern it.

When the Chatbot Wore a White Coat: Pennsylvania Tests a New Front in AI Accountability

Pennsylvania has taken a generative AI platform to court using a statute written long before the first chatbot existed. State regulators do not need federal AI legislation to act. They have the laws they need.

Nine Seconds to Catastrophe: What the Cursor and Claude Database Deletion Reveals About Agentic AI Risk

On Friday, April 24, an autonomous AI coding agent deleted a software company's entire production database, along with every backup, in nine seconds. The incident has been dismissed as a single-vendor failure. That framing is wrong and dangerous. The PocketOS catastrophe is a textbook case of compounding governance and architectural failures replicating across industries right now. Here is what went wrong, and what your organization must do before the next nine-second deletion.

When a Chatbot Becomes a Suspect: Florida's Criminal Probe into OpenAI and the New Frontier of AI Criminal Liability

Florida has become the first U.S. state to open a criminal investigation into a major artificial intelligence company. Attorney General James Uthmeier alleges that ChatGPT offered operational guidance to the accused gunman behind the 2025 Florida State University mass shooting. The case opens a new frontier in AI criminal liability, with direct implications for intelligence, law enforcement, cybersecurity, and policy communities worldwide.

From Mythos to RuleForge: Why Amazon's Agentic AI Defense Doctrine Matters for the Global South

Amazon has disclosed RuleForge, an agentic AI system that generates production-grade vulnerability detection rules 336 percent faster than manual methods while reducing false positives by 67 percent. OSRS examines why the architecture, not the productivity number, is the real story. The separation of generation from evaluation, the discipline of negative phrasing, and the preservation of human approval together define an emerging defensive doctrine that institutions cannot af

When AI Becomes the Hacker: What the Anthropic Mythos Leak Means for National Security

A leaked Anthropic memo has confirmed the existence of a next-generation AI model called Mythos, described by the company itself as posing unprecedented cybersecurity risks. Already used in real-world attacks, AI is no longer just a tool for defenders. It is increasingly a weapon. Here is what military, intelligence, law enforcement, and cybersecurity professionals need to understand right now about the AI-driven threat horizon.

When Artificial Intelligence Gets It Wrong: Five Cases That Should Alarm Every Security Professional

From a grandmother jailed for five months based on an AI facial recognition error to elderly patients denied life-sustaining care by an algorithm with a 90% error rate, these five real cases expose a dangerous pattern: AI being used as the decision-maker instead of the decision aid. Security professionals, law enforcement leaders, and policymakers must act now before the next system failure costs someone their freedom or their life.

Big Tech on Trial: What the Social Media Addiction Verdict Means for You, Your Children, and Digital Policy

A Los Angeles jury has found Meta and YouTube legally liable for the mental health harm caused to a young woman who began using their platforms as a child. The landmark verdict awards $3 million in damages and opens the door to punitive damages and thousands of similar lawsuits. OSRS breaks down what this means for digital policy, child safety, and the future of Big Tech accountability.

Global Threat Landscape 2026: What Security Leaders Must Understand Now

The global threat landscape in 2026 is defined by the convergence of cyberattacks, artificial intelligence, and geopolitical competition. This article provides a clear, executive-level breakdown of emerging risks and offers practical insights for security leaders, policymakers, and intelligence professionals navigating today’s complex and rapidly evolving security environment.

Your University's AI Tool Is Watching — And So Is Everyone Else

A default setting in ChatGPT Edu's Codex Cloud Environments is exposing university researchers' behavioral metadata to thousands of colleagues, no hacker required. An Oxford researcher proved it. For intelligence practitioners, law enforcement analysts, and policy leaders, this is not a technical glitch. It is a governance failure with real operational consequences. Here is what every institution needs to know now.

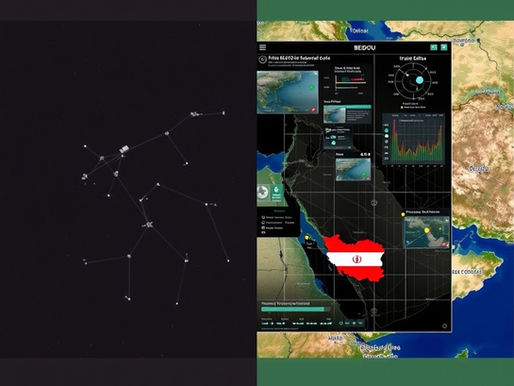

Iran's Missile Precision and the AI-BeiDou Nexus

The 2026 Iran conflict is the first war in which AI-powered targeting and BeiDou-guided missiles have been deployed at scale at the same time. OSRS examines what this means for global security, African policy, and the future of warfare.

Pentagon Anthropic AI Guardrails Dispute: Implications for National Security Governance

The Pentagon’s demand that Anthropic relax Claude AI guardrails marks a pivotal test for responsible AI in national security. This report explains the governance stakes, applicable policy frameworks, and practical risk controls needed to ensure accountable and lawful AI deployment in defense and intelligence environments.

Why the United States Is Rejecting Global AI Governance and What It Means for Security and Policy

The United States has publicly rejected centralized global AI governance. What does this mean for policymakers, cybersecurity leaders, and intelligence professionals? This analysis explains the national security, regulatory, and strategic implications of the evolving AI policy landscape.

West Virginia Sues Apple: A Defining Moment for Platform Responsibility and Digital Safety

West Virginia has sued Apple over alleged failures to prevent the distribution of child sexual abuse material through its ecosystem. The case highlights the growing tension between encryption, child protection, and platform accountability. This analysis explores the legal, cybersecurity, and policy implications for regulators, law enforcement, and technology leaders.

The Landmark Social Media Addiction Trial and the Future of Platform Accountability

A landmark social media addiction trial in Los Angeles may redefine platform liability, Section 230 protections, and AI governance. The case challenges whether engagement-driven design features such as algorithmic recommendations and infinite scroll constitute product defects. Policymakers, cybersecurity leaders, and intelligence professionals should closely examine its implications.