From Mythos to RuleForge: Why Amazon's Agentic AI Defense Doctrine Matters for the Global South

- Oludare Ogunlana

- Apr 17


When Amazon disclosed that its RuleForge system now generates vulnerability detection rules 336 percent faster than human analysts, it did more than publish a productivity number. It quietly released a defensive doctrine for the agentic AI era, one that every Chief Information Security Officer, regulator, and national security planner outside the hyperscaler club should study carefully.

The 48,000 CVE Problem

In 2025, the National Vulnerability Database published more than 48,000 new Common Vulnerabilities and Exposures (CVE) records. That number is not a plateau. It is a floor. Automated discovery tools, AI-assisted fuzzing, and the growing willingness of adversaries to weaponize disclosures within hours of publication have permanently changed the defender's problem.

The old workflow, in which a skilled analyst studied proof-of-concept code, wrote detection logic, tuned queries against traffic logs, and submitted the rule for peer review, produced excellent rules. It cannot produce enough of them.

What RuleForge Actually Does

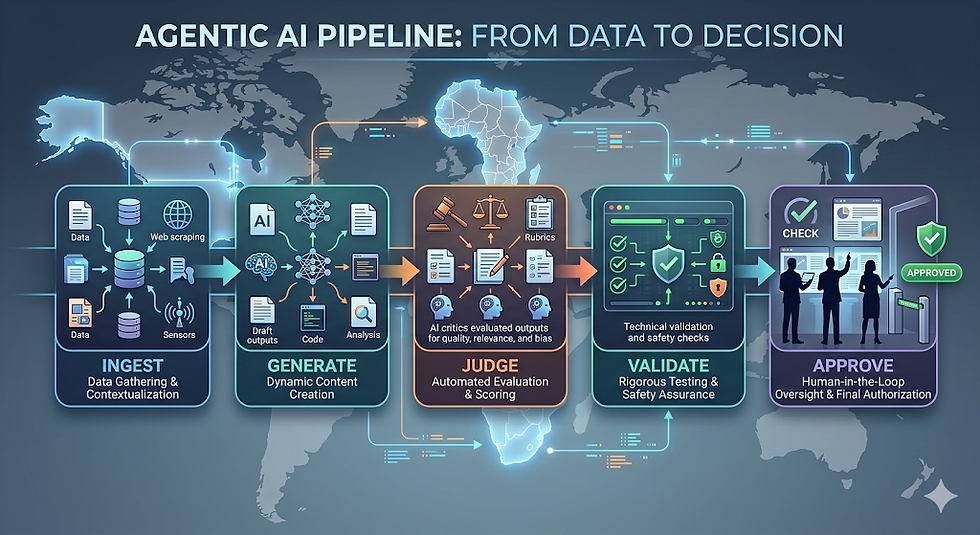

RuleForge is an agentic AI system deployed inside Amazon Web Services that turns publicly disclosed exploit code into production-grade detection rules applied to traffic captured by Amazon's MadPot honeypot network and the internal Sonaris detection system. Its architecture is deliberately decomposed into five stages:

Ingestion and prioritization. The system downloads available proof-of-concept code for new vulnerabilities and scores each exploit using content analysis and threat intelligence, focusing effort on the threats that matter most.

Parallel rule generation. Multiple candidate rules are generated simultaneously on AWS Fargate with Amazon Bedrock, each refined over several iterations.

AI judge evaluation. A separate judge model, not the generator, scores each rule on sensitivity and specificity using domain-specific prompts.

Multistage validation. Rules are tested against synthetic malicious and benign inputs, then against live traffic logs from MadPot and comparable sources.

Human approval. A security engineer reviews the best-performing candidate before any rule is deployed to production.
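To make the flow concrete, here is a minimal Python sketch of stages two through five. It is not Amazon's implementation: the regex rule format, the `Candidate` class, the `run_pipeline` function, the stub generator and judge, and the sample requests are all assumptions made for illustration. Stage one (ingestion and prioritization) is left out, and the generator and judge are passed in as separate callables so that neither can stand in for the other.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Candidate:
    pattern: str              # hypothetical rule format: a regex over request text
    judge_score: float = 0.0
    sensitivity: float = 0.0
    specificity: float = 0.0


def validate(rule: Candidate, malicious: list[str], benign: list[str]) -> Candidate:
    """Stage 4: measure the rule against labelled malicious and benign samples."""
    rx = re.compile(rule.pattern)
    true_pos = sum(1 for s in malicious if rx.search(s))
    true_neg = sum(1 for s in benign if not rx.search(s))
    rule.sensitivity = true_pos / len(malicious)
    rule.specificity = true_neg / len(benign)
    return rule


def run_pipeline(
    generate: Callable[[str], list[str]],   # stage 2: generator model (stubbed here)
    judge: Callable[[str, str], float],     # stage 3: separate judge model (stubbed here)
    approve: Callable[[Candidate], bool],   # stage 5: human reviewer decision
    poc: str,
    malicious: list[str],
    benign: list[str],
    min_judge_score: float = 0.5,           # assumed threshold, not a published value
) -> Optional[Candidate]:
    candidates = [Candidate(p) for p in generate(poc)]
    for c in candidates:
        c.judge_score = judge(poc, c.pattern)
    survivors = [c for c in candidates if c.judge_score >= min_judge_score]
    validated = [validate(c, malicious, benign) for c in survivors]
    if not validated:
        return None
    # Pick the best performer, then require explicit human sign-off.
    best = max(validated, key=lambda c: (c.sensitivity + c.specificity, c.judge_score))
    return best if approve(best) else None


# Usage with stand-in components and made-up sample requests.
poc = "GET /cgi-bin/status?cmd=;cat /etc/passwd"
malicious = [poc, "GET /cgi-bin/status?cmd=;id"]
benign = ["GET /cgi-bin/status?cmd=uptime", "GET /index.html"]


def stub_generate(poc_text: str) -> list[str]:
    return [r"cmd=", r"cmd=[^&]*;"]


def stub_judge(poc_text: str, pattern: str) -> float:
    return 0.9 if ";" in pattern else 0.6


chosen = run_pipeline(stub_generate, stub_judge, lambda c: True, poc, malicious, benign)
print(chosen)
```

In this toy run the broad `cmd=` rule also fires on a benign request, so the narrower rule wins on specificity. Nothing is returned at all unless the `approve` callable, standing in for the security engineer, says yes.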

The Doctrine Hiding Inside the Architecture

Three design choices deserve wider attention than the headline productivity figure.

Generation and evaluation must be separated. When Amazon asked the generation model to rate its own output, the model approved almost everything it produced. This is not a bug. It is a property of large language models in adversarial domains. Introducing a dedicated judge model reduced false positives by 67 percent while preserving true positive detections. The architectural primitive that makes agentic AI safe enough for security operations is the refusal to let generators grade their own homework.

Negative phrasing produces honest calibration. Asking a model to estimate the probability that a rule will fail to catch a malicious request yields better calibration than asking whether the rule correctly catches all malicious requests. Given the well-documented tendency of language models toward affirmation, framing evaluation as a search for problems is not a prompt trick. It is a discipline.

Domain-specific prompts outperform generic confidence queries. Asking a model whether a rule targets the vulnerability mechanism itself or a correlated surface feature encodes what security engineers actually look for. Generic "rate your confidence" prompts do not.
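The second and third design choices can be combined in a single judge prompt. The Python sketch below is illustrative only: the prompt wording, the reply format, the `CVE-0000-00000` placeholder, and the 0.2 acceptance threshold are assumptions, not Amazon's published prompts or settings. It asks for the probability that a rule fails, asks whether the rule targets the mechanism or a surface feature, and accepts the rule only when both answers are favorable.

```python
import re

# Hypothetical prompt wording, written for illustration only.
JUDGE_PROMPT = (
    "You are reviewing a detection rule for {cve_id}.\n"
    "Rule:\n{rule}\n\n"
    "1. Estimate the probability (0.0 to 1.0) that this rule FAILS to catch "
    "a malicious request exploiting the vulnerability.\n"
    "2. Does the rule target the vulnerability mechanism itself, or a "
    "correlated surface feature seen in one proof of concept? "
    "Answer MECHANISM or SURFACE.\n"
    "Reply exactly as: p_miss=<number>; target=<MECHANISM|SURFACE>"
)

# Assumed reply format; a real judge model would need stricter output handling.
REPLY_RE = re.compile(r"p_miss=([01](?:\.\d+)?)\s*;\s*target=(MECHANISM|SURFACE)")


def build_prompt(cve_id: str, rule: str) -> str:
    """Fill the negatively phrased, domain-specific template."""
    return JUDGE_PROMPT.format(cve_id=cve_id, rule=rule)


def parse_reply(reply: str) -> tuple[float, bool]:
    """Return (probability of a miss, whether the rule targets the mechanism)."""
    match = REPLY_RE.search(reply)
    if match is None:
        raise ValueError("judge reply did not follow the expected format")
    p_miss = float(match.group(1))
    if not 0.0 <= p_miss <= 1.0:
        raise ValueError("p_miss must lie between 0 and 1")
    return p_miss, match.group(2) == "MECHANISM"


def accept(reply: str, max_p_miss: float = 0.2) -> bool:
    """Keep a rule only if it targets the mechanism and is unlikely to miss."""
    p_miss, targets_mechanism = parse_reply(reply)
    return targets_mechanism and p_miss <= max_p_miss


prompt = build_prompt("CVE-0000-00000", r"cmd=[^&]*;")
print(prompt)
print(accept("p_miss=0.10; target=MECHANISM"))
```

The judge is never asked whether the rule is good. It is asked how likely the rule is to fail and what the rule actually keys on, which is the framing the article credits with better calibration.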

Why This Is a Response to Mythos

The Mythos incident and related reporting have made clear that capable adversaries are already using agentic AI to accelerate reconnaissance, exploit synthesis, and payload deployment. A defender community restricted to manual authorship cannot match that tempo. RuleForge is the first public, production-grade demonstration that agentic AI on the defender's side is feasible, measurable, and disciplined by human review.

The asymmetry between offense and defense in the agentic era is real, but it is not fixed. The Amazon disclosure shows that the gap is closable with architectural discipline.

Why It Matters for Nigeria, West Africa, and the Global South

The harder question is who gets to close that gap. Agentic defensive AI of the RuleForge class requires cloud-scale compute, access to frontier foundation models, mature telemetry infrastructure, and cadres of engineers capable of supervising the pipeline. These inputs are unevenly distributed.

Nigerian financial services and fintech operators face the same adversaries Amazon faces, but without the defensive multiplier.

West African critical infrastructure operators in power, water, telecommunications, and oil and gas must assume that adversaries will weaponize newly published CVEs faster than any manual detection team can respond.

Regional governments and regulators should recognize that the cyber defense divide will widen faster than the cyber offense divide, because offensive agentic AI is cheaper to access than the telemetry and engineering depth required for defensive agentic AI.

This is a strategic problem, not merely a technical one. Pooled regional detection engineering through ECOWAS or African Union cybersecurity frameworks is no longer a convenience. It is a necessity.

Six Recommendations

Require architectural separation between generator and judge components in any AI-assisted detection tooling.

Preserve human approval gates in all agentic defensive pipelines.

Build telemetry and honeypot infrastructure before building agents. Agentic tooling cannot compensate for absent telemetry.

Pool regional detection engineering capability where individual institutions cannot justify independent pipelines.

Codify governance of agentic defensive AI in national and regional frameworks, including data residency, liability, and audit requirements.

Update cybersecurity curricula to cover prompt engineering for security, judge model design, and agentic pipeline supervision.

The Takeaway

RuleForge is not a product. It is a doctrine statement. The organizations that read it as such, and that internalize the separation of generation from evaluation, the discipline of negative phrasing, and the non-negotiability of human review, will be the ones still standing when the next wave of AI-assisted intrusions arrives. The organizations that treat it as a Silicon Valley curiosity will not.

Partner with OSRS

OGUN Security Research and Strategic Consulting LLC provides intelligence-led advisory services for institutions navigating the agentic AI security transition, including defensive architecture review, AI governance frameworks, and executive briefings for boards and regulators. Visit www.ogunsecurity.com or contact our team to begin a conversation.

ABOUT THE AUTHOR

Dr. Sunday Oludare Ogunlana is Founder and CEO of OGUN Security Research and Strategic Consulting LLC and Professor of Cybersecurity. A national security scholar, he advises global intelligence and policy bodies on cyber defense architecture, AI governance, and the strategic exposure of emerging economies in the agentic AI era.
